The creation of knowledge, what AI can (and can’t) do, and how I’m putting skin in the game

March 2025

I’ve spent the past two years getting a PhD in AI and robotics. Deep in the trenches of research, I’ve learned how AI works, where it’s useful, and where it isn’t. So in this post, I’ll talk about the areas where AI will definitely change things beyond recognition, and where I don’t think it will. I’ll describe my mental model of knowledge and scientific discovery, where AI and robotics fit into it, and where I see myself spending time, energy, and money in the next couple of years.

What this is: An exploration of how I understand knowledge and scientific discovery—and where AI and robots fit into it. Also a brief outline of some of the challenges of deploying AI in real life and how I’m approaching them.

What this is not: Investment advice. I also won’t get into company fundamentals or talk about math. This is all based on vibes. This is also not a complete analysis of everything AI-related. There are plenty of use cases I won’t cover because they don’t interest me (e.g., recommender algorithms) or I don’t know much about them (e.g., video/image gen).

Now, let’s start with my model of human knowledge.

The Geometry of Knowledge

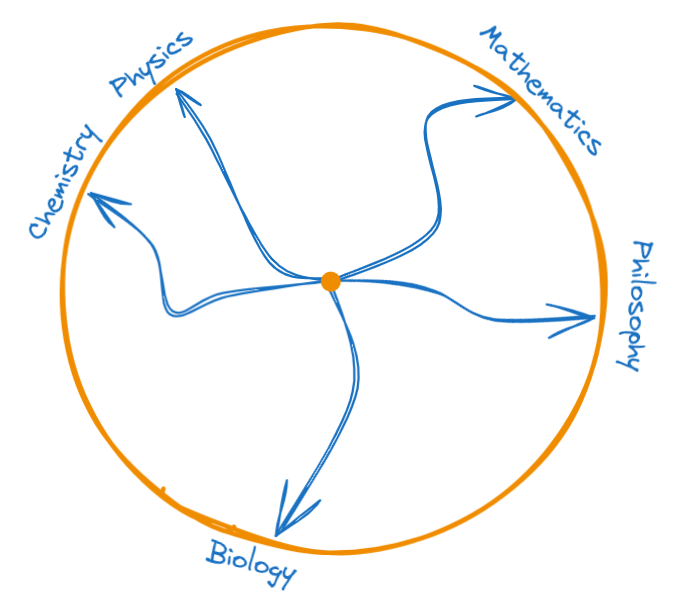

Why Everyone is an NPC and Machines of Loving Grace

I like to think of humanity’s knowledge as a circle containing everything we’ve discovered or created. Everyone starts in the center, knowing almost nothing. Over time, through school, work, or real-life experiences, people get closer to the edges. The edge represents the cutting edge of what we know. Some get good at chemistry or mathematics. Some become expert painters or ballet dancers. Some are born into privilege and learn about politics, power, and human nature. Make it to the edge, and you’re bound to be around some interesting ideas (and people!).

Few ever reach the edge. One reason is that education (assuming you even get one) isn’t set up for making people world-class at anything. It’s more of a one-size-fits-all treatment focused on pattern-matching, not on cultivating curiosity and individual strengths.

Another reason is that most jobs don’t incentivize creativity or knowledge creation. Sadly, most jobs are repetitive and boring. Some people are fine with that. They have a role, they’re given instructions, and they follow them. They are like non-playable characters (NPCs) in a video game. And look, I don’t blame them. Structure is a comforting handhold when life is uncertain. Only a few ever snap out of the Matrix, think critically, and create things that weren’t there before.

Reaching the edge is also largely a matter of luck. You might be born with the biggest brain in the world, The One, and still find yourself in an uncontacted tribe in the Amazon rainforest. And it’s going to be very hard to push the boundaries of human knowledge if you can’t build on what’s already there.

Luck isn’t just about access to books, the internet, or other resources. You also need a mentor who has already made it to the edge and can boost you over it. Warren Buffett had Benjamin Graham. Ramanujan had G.H. Hardy. Alexander the Great had Aristotle. And for most of history, the probability of having access to a smart person and also getting their mentorship was astronomically low.

AI means good mentorship is no longer a matter of luck. As this technology becomes more accessible, everyone will have their own personal Aristotle. A mentor that understands their limitations and pushes them toward growth. A friend that never tires, never forgets, always listens, and is (literally) a living repository of all of humanity’s knowledge. A machine of loving grace.

If we play this right, these machines will guide us to the bleeding edge of human knowledge (and personal potential). I don’t know what happens when billions of people suddenly gain access to a lifestyle once limited to a lucky few. But I’m certain that letting people develop their creative potential—at scale—will take us in a direction unlike anything we’ve ever seen. What a time to be alive!

Here are a couple of companies working on this that I think are on the right track:

- Elysian Labs by near

- Friend by Avi Schiffmann

- Eureka Labs by Andrej Karpathy

- Khanmigo by the one and only Sal Khan

Divine Inspiration

You made it to the boundary of human knowledge. Now what?

You get to choose how to push it forward.

That could mean inventing new chemistry, creating a new art style, or connecting two unrelated mathematical fields.

Nobody really knows how these creative leaps happen, but I think they come in two flavors:

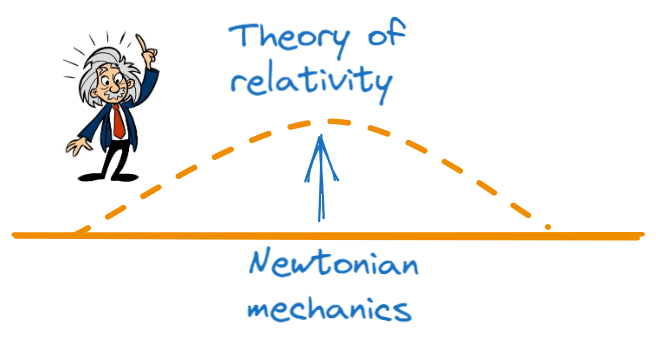

Divine inspiration, conjectures, and hunches. This is when we try something weird: we come up with an idea that certainly relies on understanding the current paradigm but pushes things in a significantly different direction. Examples include quaternions, Gödel’s incompleteness theorems, and the theory of relativity.

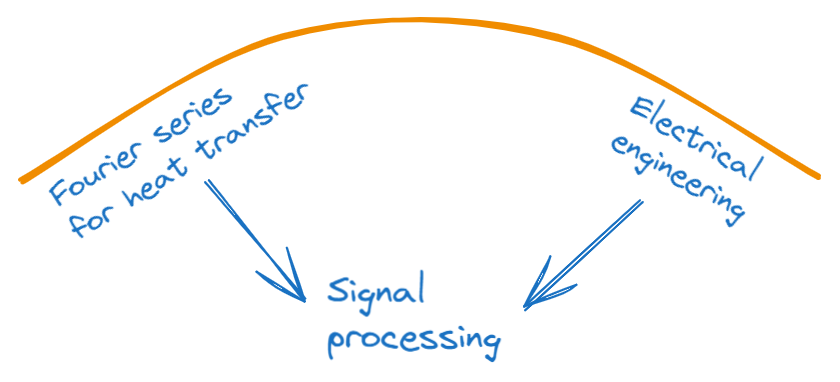

Connections between two fields. I think of this one as less about pushing the boundary of knowledge and more about filling in a hole in what we already know. One example is Galois realizing that he could reason about polynomial equations using groups of permutations of their roots. Another is electrical engineers figuring out that Fourier series (originally developed to study heat conduction) were also useful for signal analysis. The material was already there; someone just had to make the connection.

I think that the current form of AI (i.e., neural networks) is uniquely suited for making connections (#2) but isn’t really set up to channel divine inspiration (#1). This may be an unpopular opinion in the age of scaling laws and trillion-dollar AI clusters. Some people think artificial general intelligence is just a bigger computer away.

But my opinion comes from a first-principles analysis: neural networks, after all, interpolate. They learn from data, recognize patterns, and fill in gaps. And they certainly perform very well when fed massive amounts of data. But, in general, they struggle outside their training distribution. And I don’t think things like scaling test-time compute change the fundamental interpolative nature of neural networks: sometimes filling in a gap in your training data takes a lot more steps. But you’re still “only” filling in a gap.
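To make the interpolation point concrete, here is a minimal sketch. It uses k-nearest-neighbor regression as a deliberately simple stand-in for a neural network (it is not one): the model only ever sees y = x² on [0, 1], so a query at x = 0.55 lands in a gap between known points, while a query at x = 3 falls outside the training distribution. The function name `knn_predict` and the choice of k = 2 are illustrative assumptions.

```python
def knn_predict(train_x, train_y, x, k=2):
    """Average the targets of the k training points closest to x."""
    # Sort training indices by distance to the query and keep the k nearest.
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return sum(train_y[i] for i in nearest) / k

# Training data: y = x**2, sampled only on [0, 1].
train_x = [i / 10 for i in range(11)]
train_y = [x ** 2 for x in train_x]

for query in (0.55, 3.0):  # one point inside the data, one far outside it
    pred = knn_predict(train_x, train_y, query)
    print(f"x={query}: predicted {pred:.3f}, true {query ** 2:.3f}")
```

Inside the range the prediction is close to the truth; at x = 3 the model can only echo the largest targets it has already seen. The toy exaggerates the effect, but it shows the difference between filling a gap and leaving the data behind.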

Now don’t get me wrong: if there’s ever an answer to the question of how to build artificial general intelligence, I’d bet it involves neural networks. I’m just being realistic about where AI will be most useful. In its current form, AI is great at patching holes in human knowledge. But I don’t think it will take us to the singularity.[1]

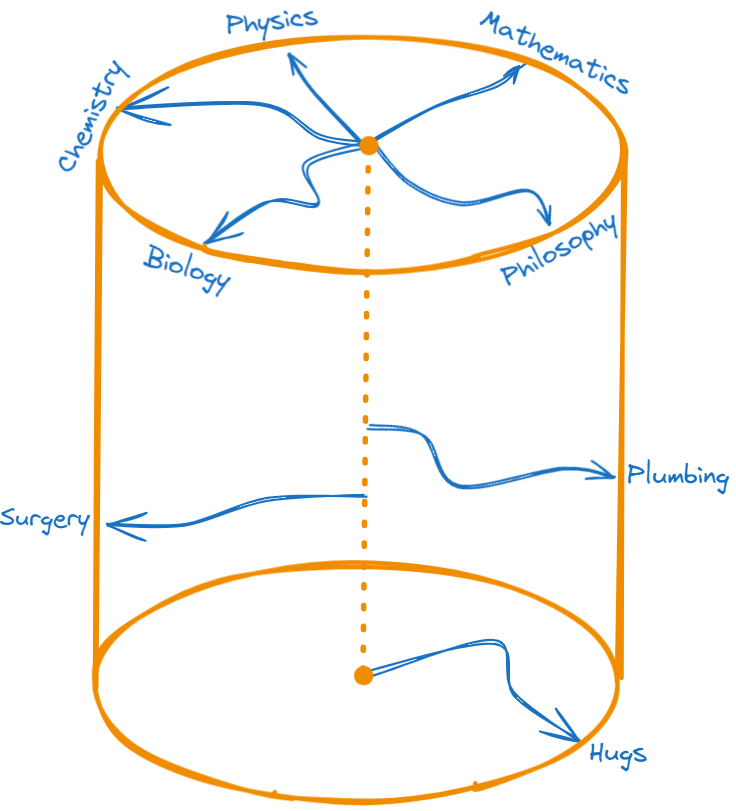

Machine Hugs and New Dimensions

The knowledge encoded in text on the internet is only a tiny slice of all of human knowledge. A plumber’s skills, a ballet dancer’s instincts, or a surgeon’s precise touch can’t be captured in words alone.

The next step is to increase the dimensionality of the data we’re gathering. This is where I see robots coming in: by embodying AI, we’ll expand data collection to the full bandwidth of human experience—audio, video, and touch. I want AIs that can give you a good hug.

To get there, I see three key requirements: robots, uncertainty quantification, and rapid adaptation.

Let’s start with robots. If we want AI to truly understand the real world, it has to operate in the real world. That means we need robots that can safely and reliably interact with humans and the environment.

Obvious challenges include programming and building these robots. But a problem I don’t think gets talked about enough is maintenance. Robots have moving parts, and moving parts break.

If we want robots to exist in the real world at scale, we need the equivalent of gas stations, mechanics, and roads. I anticipate supply chains will be a major bottleneck, possibly the factor that makes or breaks the widespread adoption of highly capable robots. This issue is particularly pressing as the world moves away from globalization and US robotics manufacturers increasingly have to rely on domestically produced parts.

On to uncertainty quantification. If we want robots to learn fast, they’re gonna have to reason about what they don’t know and what’s worth finding out. This is why my recent research has focused on extending dual control theory (uncertainty-aware decision-making) to work with neural networks. I want to come up with scalable ways of 1) quantifying robot uncertainty and 2) doing something about it.
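Here is a minimal sketch of what 1) and 2) can look like in their simplest form. It is not dual control and not my research method; it swaps in a common heuristic, bootstrap-ensemble disagreement: fit several models on resampled data, treat the spread of their predictions as uncertainty, then probe where that spread is largest. The linear model, the toy data, and the names (`bootstrap_ensemble`, `disagreement`, `candidates`) are hypothetical.

```python
import random
import statistics

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a*x + b."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, my - a * mx

def bootstrap_ensemble(xs, ys, n_models=20, seed=0):
    """Fit each ensemble member on a resample (with replacement) of the data."""
    rng = random.Random(seed)
    models = []
    while len(models) < n_models:
        idx = [rng.randrange(len(xs)) for _ in xs]
        bx = [xs[i] for i in idx]
        if len(set(bx)) < 2:  # a line needs at least two distinct x values
            continue
        models.append(fit_line(bx, [ys[i] for i in idx]))
    return models

def disagreement(models, x):
    """Spread of ensemble predictions at x: a crude uncertainty estimate (1)."""
    return statistics.pstdev([a * x + b for a, b in models])

# Noisy observations of y = 2x + 1, collected only for x in [0, 1].
rng = random.Random(1)
xs = [i / 9 for i in range(10)]
ys = [2 * x + 1 + rng.gauss(0, 0.2) for x in xs]
models = bootstrap_ensemble(xs, ys)

# Doing something about it (2): probe where the ensemble disagrees most.
candidates = [0.5, 2.0, 5.0]
best = max(candidates, key=lambda x: disagreement(models, x))
print("most informative query:", best)
```

The ensemble agrees near the data it has seen and disagrees far away from it, so the sketch picks x = 5.0 as the place to collect the next observation.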

There are also interesting problems related to self-reference. I think it is likely that robots will need to reason about how they learn if we want to maximize how efficiently they gather and learn new information. In practice, this means neural networks reasoning about neural networks, and self-reference is a fast lane to Paradox World (e.g., “This sentence is false.”).

Finally, rapid adaptation. Humans literally change the way their neurons fire as they learn new things, and robots will need to do something similar. Once they determine what’s worth learning (e.g., “It seems like my human would be very happy if I could skateboard”) and take actions to learn it (“I’ll go to the skatepark”), they’ll need to update their internal models with the new information. But retraining a neural network from scratch every time would simply be too expensive. And doing it in real time? Forget it. For reference, ChatGPT took several months and millions of dollars to train. This is why my recent research has also focused on scalable methods for quickly adapting neural networks to new observations.
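As a rough illustration of why incremental updates are so much cheaper than retraining, here is a minimal sketch of recursive least squares (RLS), a classical technique and not my own method. It assumes the expensive part of the network (a hypothetical feature map, `features`) stays frozen and only a linear output layer is adapted. Each new observation then updates the weights `w` and a matrix `P` (which tracks the inverse of the accumulated feature correlations) in O(d²) time, without revisiting old data. All names and the toy data stream are illustrative.

```python
def rls_update(w, P, phi, y, lam=1.0):
    """One recursive-least-squares step: fold a single observation into (w, P).

    lam is a forgetting factor; lam=1.0 weights all past observations equally.
    """
    n = len(w)
    p_phi = [sum(P[i][j] * phi[j] for j in range(n)) for i in range(n)]  # P @ phi
    denom = lam + sum(phi[i] * p_phi[i] for i in range(n))
    gain = [v / denom for v in p_phi]
    err = y - sum(w[i] * phi[i] for i in range(n))  # prediction error on the new point
    w = [w[i] + gain[i] * err for i in range(n)]
    # P <- (P - gain * p_phi^T) / lam, which relies on P staying symmetric.
    P = [[(P[i][j] - gain[i] * p_phi[j]) / lam for j in range(n)] for i in range(n)]
    return w, P

def features(x):
    """Hypothetical frozen feature map (e.g., a network's penultimate layer)."""
    return [float(x), 1.0]

w = [0.0, 0.0]
P = [[1e6, 0.0], [0.0, 1e6]]  # large initial P acts as a very weak prior on w
for x in range(10):
    y = 3.0 * x - 2.0  # noiseless stream from y = 3x - 2
    w, P = rls_update(w, P, features(x), y)
print("adapted weights:", w)
```

After ten samples of y = 3x - 2 the weights land close to (3, -2). Each update touches only a d × d matrix, so the cost per observation stays constant no matter how much data the robot has already seen.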

My vision is a world with AIs that 1) are out there walking with us, 2) are curious, and 3) learn fast.

Skin in the Game

Given what I just told you, this is where I’m putting my money:

- Education-oriented AI companies.

- Robotics companies that have experience with supply chain management.

- Compute (duh).

Most of the companies doing this kind of work are private, but if you do your homework, you can find a couple of good ETFs. 😉

In terms of time and energy, I’ll be focusing my research on the following topics:

- Uncertainty quantification for neural-network-based decision-making (both philosophical and practical methods).

- Active uncertainty reduction, e.g., “Now that I know what I don’t know, what actions should I take to figure it out?”

- Fast adaptation techniques, so robots can learn in real time.

I’ll also be spending a lot of time just becoming a better engineer. At the end of the day, robots are fucking hard, and building them takes way more than being good at some tiny slice of math or computer science. Robots sit at the intersection of electronics, computer architecture, probability, dynamical systems, manufacturing, optimization, materials science, and even philosophy. You may not need to be good at all of them to be a good robot engineer (although that wouldn’t hurt either), but you do need the ability to debug multi-dimensional problems.

Thanks for reading, and don’t forget: Fortitudine Vincimus.

Footnotes

[1] Or it won’t take off by itself, at least. But maybe it will, if having personal AI mentors unlocks everyone’s creative potential.